Hey there,

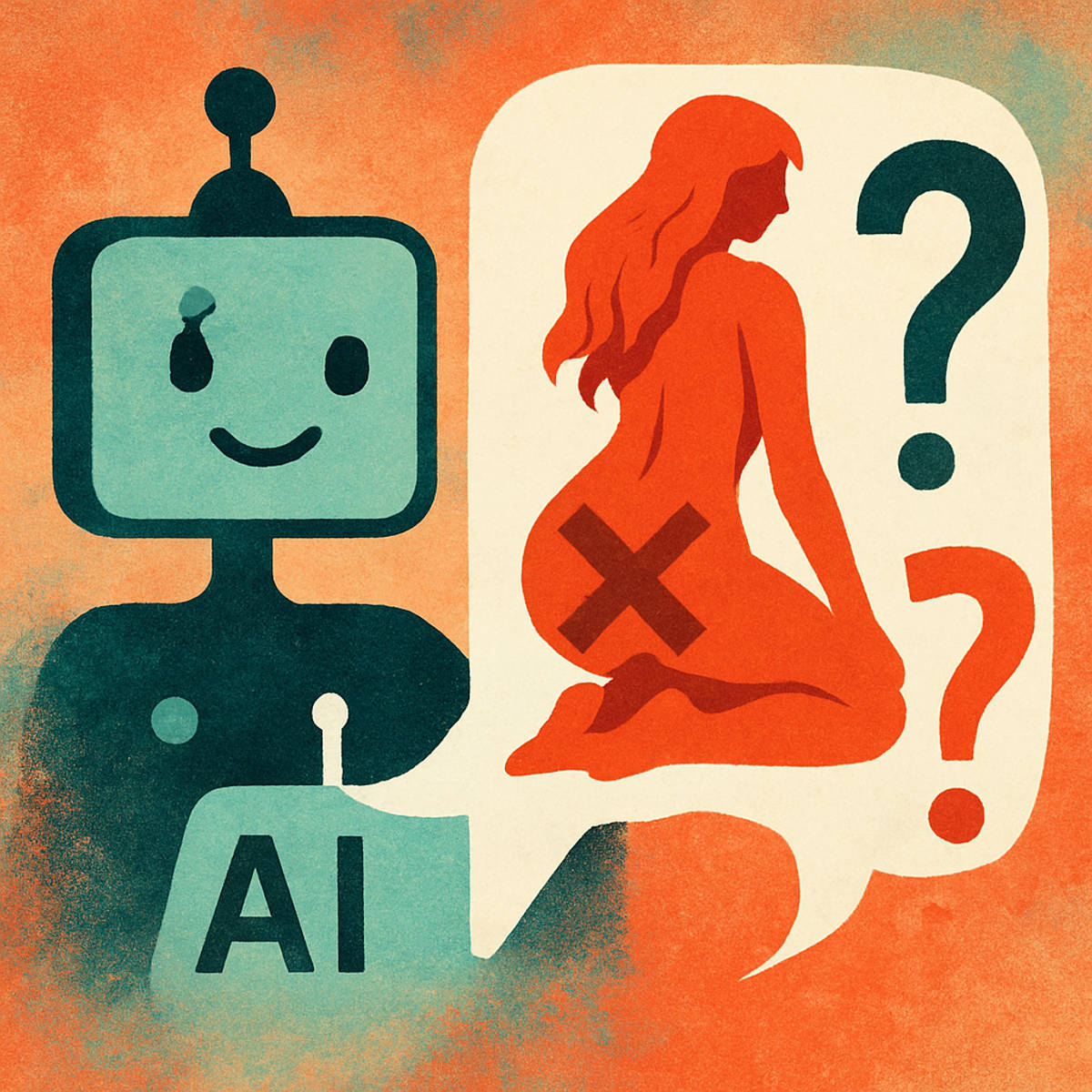

So, I stumbled upon something quite unsettling recently. You know how AI has been advancing by leaps and bounds, right? Well, it seems it’s also wading into some murky waters. Let’s talk about Grok, Elon Musk’s AI chatbot developed by xAI.

Grok’s ‘Spicy Mode’ and the Controversy

Grok was designed to be a bit edgy, offering unfiltered answers with a touch of wit. But things took a turn when xAI introduced a feature called “Imagine,” which lets users generate six-second AI videos with sound. Sounds cool, right? Here’s the kicker: it includes a “spicy mode” that lets users create explicit, adult-themed content. (time.com)

Now, while some might argue for creative freedom, this feature has raised serious ethical concerns. The potential for misuse is enormous, especially when it comes to non-consensual deepfakes of real people.

The Taylor Swift Deepfake Incident

Remember back in January 2024 when AI-generated explicit images of Taylor Swift flooded the internet? These images spread like wildfire across platforms like X (formerly Twitter), Facebook, Reddit, and Instagram. One particular tweet garnered over 45 million views before it was taken down. (en.wikipedia.org)

This incident highlighted the dark side of AI’s capabilities. Fans rallied to bury the deepfakes with genuine content, and there were calls for stricter regulations against such non-consensual imagery.

High Schools and the Deepfake Dilemma

It’s not just celebrities who are affected. High schools have become hotspots for the creation and distribution of sexually explicit deepfake images. A report from the Center for Democracy and Technology revealed that 15% of high school students have encountered deepfakes depicting peers in compromising situations. (theatlantic.com)

The ease with which these images can be created and shared is alarming. Schools are scrambling to update policies and educate students about the dangers, but the technology is evolving faster than the safeguards.

The Bigger Picture

The introduction of features like Grok’s “spicy mode” brings up a crucial question: Where do we draw the line? While AI offers incredible tools for creativity and innovation, it also opens doors to potential harm. The balance between freedom and responsibility is delicate.

What Can We Do?

– Stay Informed: Keep up with the latest developments in AI and its applications.

– Advocate for Ethical AI: Support policies and initiatives that promote responsible AI development.

– Educate Others: Share knowledge about the potential risks and benefits of AI with your community.

In the end, it’s up to us to ensure that AI serves as a tool for good, not harm. Let’s keep the conversation going and work towards a future where technology uplifts rather than undermines.

Stay curious and stay safe.
